It's the Process, Not the Person

Blameless culture is the most-cited and least-operationalized idea in engineering leadership. We treat it as a leadership doctrine - one that shapes incident response, retention, hiring, and recovery from AI agent failures.

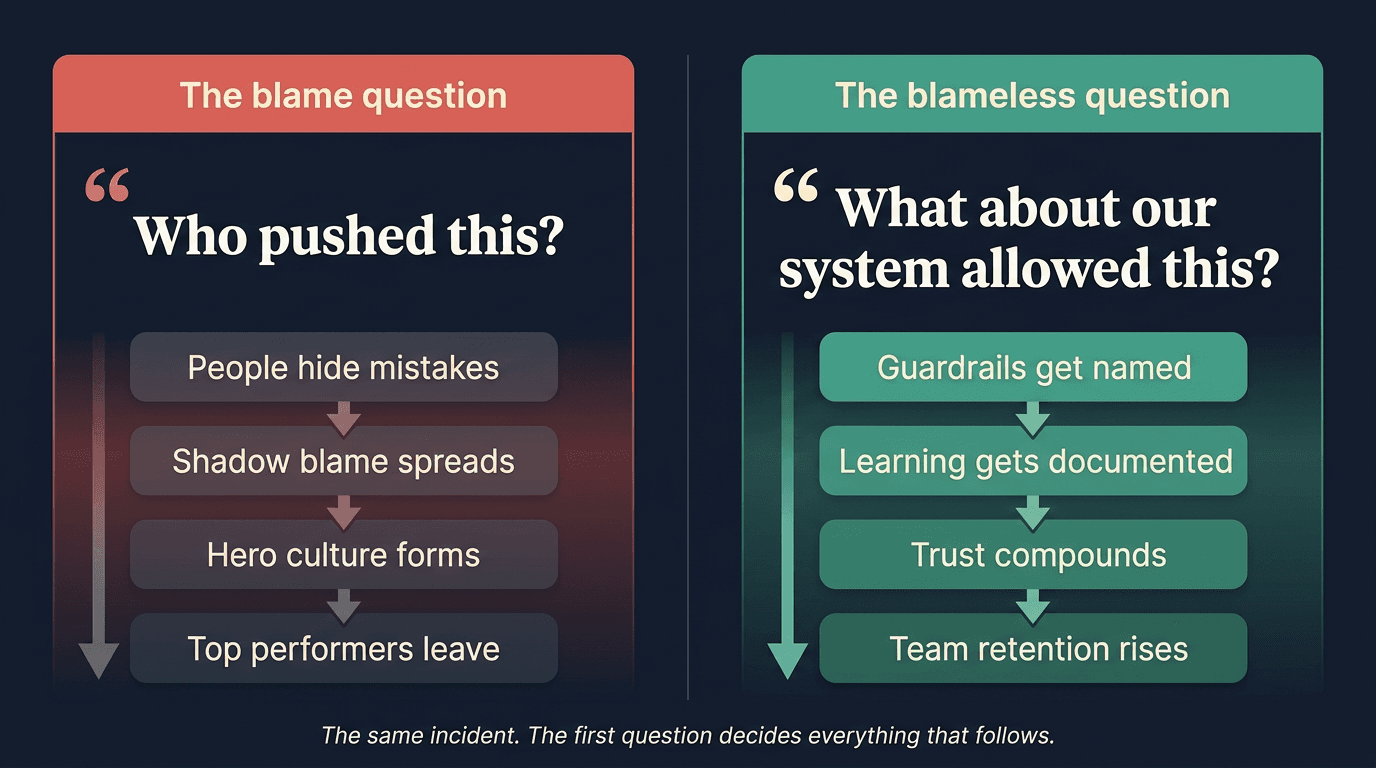

A deploy goes out at 4:47 PM. By 5:02, an unrelated downstream service is throwing 500s for a small but vocal cohort of users. Slack lights up. The on-call pages the engineer who shipped. The first message in the incident channel - posted by a senior leader - is a single question: who pushed this?

That question is the entire culture in three words. Everything that happens for the next ninety minutes - and for the next year - bends around it. People hide. People hedge. The next deploy gets a third reviewer who reads nothing carefully but signs everything. The actual gap, a missing schema check on a contract the two services renegotiated three weeks ago, never gets named.

The team across the hall handled an almost identical incident the same week. Their first message was different: what about our system allowed this to ship? By the end of the hour they had a fix, a guardrail, and a written explanation a junior engineer could read on day one and understand. Same incident class. Two leadership responses. Two completely different cultural trajectories.

The doctrine

We publish this as Blameless Root Cause under Resilient Mindset on our principles page, and we ship it as a Cursor rule into every repository we work in. This post is the long-form operational version: what the doctrine actually means, why it is the highest bar a leader can hold, and how to start practicing it the next time something breaks.

What the doctrine actually means

Blameless does not mean unaccountable. It is a sharper kind of accountability. The accountability moves from the task you executed to the process you own. A senior engineer is not accountable for never pushing a bad change - that is impossible. They are accountable for the testing, review, and rollback systems that make bad changes recoverable. A leader is not accountable for whether their team makes mistakes. They are accountable for whether their team can detect, surface, and learn from mistakes without lying.

The systems-thinking move is to treat every individual error as evidence of a missing guardrail. People act rationally with the information and tools available to them at the time. If a mistake was possible, the system made it possible. Sidney Dekker has been making this argument for two decades. His Field Guide to Understanding "Human Error" (3rd ed., 2014) reframes "human error" as "human work in context," and it is the cleanest available statement of the principle. John Allspaw's 2012 Etsy post, Blameless PostMortems and a Just Culture, is where most of modern software engineering first encountered the same idea. Google's Site Reliability Engineering book (2016, Chapter 15) made it operational doctrine for an entire generation of infrastructure teams.

We have all read the slogans. The reason most teams still operate on blame is that the doctrine has three look-alikes that masquerade as practice.

Shadow blame: "I'm not blaming anyone, but..." The sentence ends with a name. The team hears the name.

Retroactive standards: "They should have known." If the standard was not written down before the incident, it is being invented in response to it.

Hero culture: "We just need better people." A system that depends on superhuman people is a system that has not been designed.

Why this is the retention argument

Top performers will not work where they are scapegoated. They have options. They notice the first time a leader's response to an incident lands on a person instead of a system, and they remember it. By the second time, the calculus is already running in the background of every standup.

Blame culture trains the team to do four things, all of them corrosive: hide problems until they are unhideable, minimize severity in the writeup, ship cover-your-ass code that protects the author at the expense of the user, and avoid the hard technical decisions that any later author might second-guess. We have watched all four of these patterns spread through a team in under a quarter, and we have watched them disappear in less than that when the leader's verbal habits change.

The compounding effect is what makes this a strategic question and not a tone question. Amy Edmondson's 1999 paper in Administrative Science Quarterly, "Psychological Safety and Learning Behavior in Work Teams," and her 2018 book The Fearless Organization established psychological safety as the prerequisite for team learning and innovation. Google's Project Aristotle (2015) confirmed it at scale: across the 180 teams it studied, psychological safety was by far the most important of the five dynamics that set effective teams apart. Google described it as the underpinning of the other four: dependability, structure and clarity, meaning, and impact.

The counterintuitive frame is the one most leaders miss. Blameless culture is a higher bar, not a lower one. It does not soften accountability. It moves accountability up the stack, from "did you screw up" to "did you build the system that catches the screw-up before it reaches users." That is the bar a high-performing organization holds, and it is the bar that retains the people who can clear it.

The hiring, firing, and promotion implications

A leadership doctrine that does not show up in personnel decisions is not a doctrine. It is a poster. The doctrine shows up in three places.

Hiring

Screen for systems thinking. Ask every candidate about a project that went badly. Listen for the grammar of their answer. Candidates who blame past teammates in interviews are predicting how they will behave on yours. Candidates who say "what about our process let this happen" are showing you the verbal habit you want to spread. This is not a soft signal. It is the strongest single predictor we have found for whether a senior hire will lift the team or pull it down within a quarter.

Firing

The doctrine does not mean nobody ever leaves over performance. It means the leader's first question when someone is struggling is "what about our system is failing this person?", not "is this person broken?" Sometimes the answer is that the system is fine and the person is in the wrong role, the wrong stage, or the wrong company. That is still a question of fit, not a question of blame, and it gets handled with respect because the underlying premise was respect. The conversations are harder to have and easier to live with afterward.

Promotion

Promote the people who already use this language without being prompted. They are the people whose teammates copy their grammar within three months. The doctrine spreads through imitation more than instruction, and the leaders you elevate become the compounding force that decides whether your culture keeps the shape you want.

The bad-apples corollary

The doctrine for AI agent failures

When an AI agent ships the wrong code, hallucinates a content type, or invents a tool call that does not exist, the instinct is to say the agent did the wrong thing. It feels like the agent's fault. It is not.

The agent acted on the context, prompt, tools, and evals you gave it. The investigation question is identical to the human one: what about our system allowed this output? The system in this case is the prompt, the retrieval pipeline, the tool registry, the eval harness, the model pin, the permission scope, and the documentation the agent was pointed at. Every one of those is a guardrail you own. When the agent gets it wrong, one of those guardrails was missing or wrong.

We learned this the hard way on a project where an AI agent invented a content type that no model in our stack actually supported. The shipped code looked right, passed local checks, and broke production for a small percentage of users. The first instinct in the room was to talk about the agent's reliability. The doctrine forced us into the more useful conversation: our principle for using existing standards was not codified anywhere the agent could find. The fix was a Cursor rule - Stand on the Shoulders of Giants - that the agent now reads on every relevant task. The same category of failure has not recurred.
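A rule file is guidance the agent reads; it pairs well with an automated check that fails loudly when generated code uses a content type nothing in the stack supports. The sketch below is illustrative only: the allowlist `SUPPORTED_CONTENT_TYPES`, the `UnsupportedContentType` exception, and `validate_content_parts` are hypothetical names, and it assumes message payloads are lists of dicts carrying a `type` key, a shape common to chat-style model APIs but assumed here rather than taken from any specific provider.

```python
# Hypothetical guardrail: reject content types no model in the stack supports.
# The allowlist below is an illustrative assumption; in practice it would be
# generated from whatever registry the team treats as the source of truth.
SUPPORTED_CONTENT_TYPES = frozenset({"text", "image_url"})


class UnsupportedContentType(ValueError):
    """Raised when a payload references a content type outside the allowlist."""


def validate_content_parts(parts: list[dict]) -> None:
    """Raise UnsupportedContentType if any part's 'type' is not supported.

    Parts with no 'type' key are reported as '<missing>' rather than skipped,
    so a malformed payload fails the check instead of slipping through.
    """
    referenced = {str(part.get("type", "<missing>")) for part in parts}
    unknown = sorted(referenced - SUPPORTED_CONTENT_TYPES)
    if unknown:
        raise UnsupportedContentType(
            f"unsupported content type(s): {', '.join(unknown)}; "
            f"supported: {', '.join(sorted(SUPPORTED_CONTENT_TYPES))}"
        )
```

Run inside a unit test or at the boundary where requests are assembled, a call such as `validate_content_parts([{"type": "text", "text": "hi"}])` passes silently, while an invented type raises before the change can ship. The error message names the supported set on purpose: it points the next reader, human or agent, at the guardrail instead of at whoever wrote the call.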

This reframe is what separates teams that build trustworthy agents from teams that ship demos and apologize. It is the same principle we have been writing about as Pattern 4 of cross-functional AI-native culture: blameless investigation extends across the whole team, human and AI agent alike.

The discipline

The doctrine is not a one-time speech. It is a daily verbal habit that has to survive the moments when it is hardest to hold. The hardest moment is when someone clearly did mess up. They missed the obvious thing. They ignored the warning. They shipped the change that the playbook says not to ship.

The doctrine still holds. The post-incident conversation, in writing, in the channel, and in the retro, is still about the missing guardrail. The personal accountability conversation - the "I want to talk about how this landed and what we expect going forward" conversation - happens privately, and it happens separately from the systems conversation. Mixing them collapses both. Sidney Dekker's Just Culture (3rd ed., 2017) is the most useful book we know for leaders who are trying to hold both lines at once, and the line he draws between blameless learning and personal accountability is the one we operate on.

The other half of the discipline is what you build instead of blame. Add guardrails, not approvals. Sign-offs add friction without adding safety; an extra reviewer who reads nothing carefully is a worse system than no reviewer at all, because everyone assumes someone else checked. Automated checks add safety with far less friction. The post-mortem is finished when one new check, one new test, one new alert, or one new piece of documentation has shipped. Anything less is a meeting, not a fix. This is what Don't Leave Broken Windows looks like in incident response: every investigation ends with a repaired window or a documented plan to repair it.
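The exit criterion above is simple enough to encode in whatever tracks post-mortems. The sketch below is a hypothetical model, not a tool we ship: `ActionKind`, `ActionItem`, `PostMortem`, and `is_finished` are illustrative names. It counts only shipped checks, tests, alerts, and documentation, and deliberately gives an added approval no credit.

```python
from dataclasses import dataclass, field
from enum import Enum


class ActionKind(Enum):
    CHECK = "check"
    TEST = "test"
    ALERT = "alert"
    DOCUMENTATION = "documentation"
    APPROVAL = "approval"  # an extra sign-off: recorded, but never counts as a fix


# Only these kinds count as a guardrail under the exit criterion.
GUARDRAIL_KINDS = frozenset(
    {ActionKind.CHECK, ActionKind.TEST, ActionKind.ALERT, ActionKind.DOCUMENTATION}
)


@dataclass
class ActionItem:
    kind: ActionKind
    description: str
    shipped: bool = False


@dataclass
class PostMortem:
    title: str
    actions: list[ActionItem] = field(default_factory=list)

    def is_finished(self) -> bool:
        """True once at least one guardrail-type action has actually shipped."""
        return any(a.shipped and a.kind in GUARDRAIL_KINDS for a in self.actions)
```

Applied to the opening incident, a post-mortem whose only shipped action is a third reviewer stays open; it closes once the missing schema check ships:

```python
pm = PostMortem("Downstream 500s after the 4:47 PM deploy")
pm.actions.append(ActionItem(ActionKind.APPROVAL, "Add a third reviewer", shipped=True))
print(pm.is_finished())  # False
pm.actions.append(ActionItem(ActionKind.CHECK, "Schema check on the renegotiated contract", shipped=True))
print(pm.is_finished())  # True
```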

How to start tomorrow

The doctrine is too big to "adopt." It is small enough to start practicing in your next conversation. Pick one of the following and use it the next time the situation arises.

Run the next 5 Whys to a guardrail

Flip the first question in your next 1:1

Audit your retro and post-mortem template

Apply the doctrine to your AI agents

Why this compounds

The same doctrine that fixes today's outage is what retains your best engineers next quarter, what makes your AI agents trustworthy next year, and what spreads through every team a leader you promote ever runs. Blameless root cause is not soft. It is the highest leadership bar we know, and it compounds across every other decision a high-performing organization makes.

We treat it as the foundation of the rest of the leadership stack we publish here, and as a Cursor rule we ship into every repository our team and our AI agents work in. The next post in this series is the practical playbook: the actual artifacts - templates, rule files, and meeting structures - we use to hold the doctrine when it is hardest.

Ship the doctrine, not the slogan

If your team is operationalizing blameless culture, building AI-native engineering practice, or designing the next generation of agent infrastructure, we should talk.