Hire the Robots

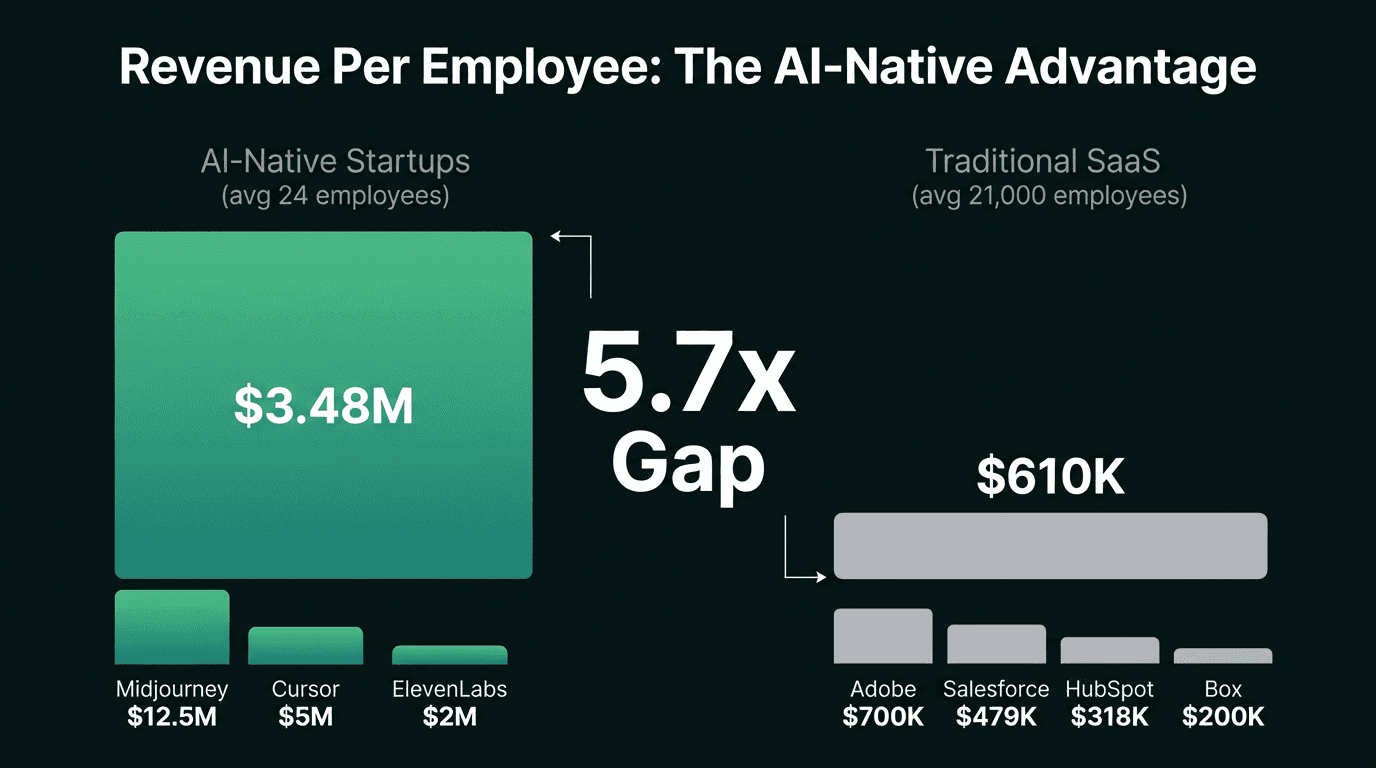

AI-native startups generate $3.5M revenue per employee. Traditional companies average $200-600K. The gap is not closing - it is compounding. Teams that put AIs to work alongside humans will define the next decade. Teams that don't will wonder what happened.

There is a number that should keep every CEO, VP of Engineering, and team lead awake at night: $3.48 million.

That is the average revenue per employee at the top AI-native startups in 2025, according to research by Jeremiah Owyang. The top traditional software-as-a-service (SaaS) companies? $610,000. That is a 5.7x gap - and it is getting wider, not narrower.

Midjourney generates $12.5 million per employee. Cursor generates $5 million. Eleven Labs generates $2 million. Meanwhile, Salesforce sits at $479,000. HubSpot at $318,000. Box at $200,000.

The difference is not that these startups found a better business model. The difference is that they hired the robots.

The Number That Changes Everything

Revenue per employee (RPE) has always been a useful benchmark. But in the AI era, it has become the defining metric - the single number that separates companies building the future from companies being displaced by it.

Revenue Per Employee: AI-Native vs. Traditional

- Top AI-native startups: $3.48M per employee. Average team size: 24 people. Average revenue: $37M. 74% profitable.

- Top traditional SaaS companies: $610K per employee. Average team size: 21,000 people. Decades of optimization. Still 5.7x behind.

Source: Jeremiah Owyang, Web Strategist (May 2025). Traditional SaaS includes Adobe ($700K), Salesforce ($479K), HubSpot ($318K), Box ($200K).
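The multiples behind these comparisons are simple division, and it can help to see them worked out. The sketch below uses only the per-employee figures quoted in this post (they are not independently verified here); the dictionary and the `gap_multiple` helper are illustrative names, not part of any cited research.

```python
# Revenue per employee in USD, exactly as quoted in this post.
RPE = {
    "AI-native average": 3_480_000,
    "Midjourney": 12_500_000,
    "Cursor": 5_000_000,
    "Eleven Labs": 2_000_000,
    "Traditional SaaS average": 610_000,
    "Adobe": 700_000,
    "Salesforce": 479_000,
    "HubSpot": 318_000,
    "Box": 200_000,
}


def gap_multiple(leader: str, baseline: str) -> float:
    """Return how many times more revenue per employee `leader` has than `baseline`."""
    return RPE[leader] / RPE[baseline]


if __name__ == "__main__":
    # Average AI-native startup vs. average traditional SaaS company.
    print(f"{gap_multiple('AI-native average', 'Traditional SaaS average'):.1f}x")  # 5.7x
    # A single-company comparison using two of the figures above.
    print(f"{gap_multiple('Midjourney', 'Salesforce'):.1f}x")  # 26.1x
```

Individual pairs vary widely, which is why the averages are the more useful headline number.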

As Paul Baier at GAI Insights argues: forget the debate about whether your company is "AI-first" or "AI-native" or "AI-accelerated." The only question that matters is: Does your AI strategy increase revenue and profit per employee? If it does, the label is irrelevant. If it does not, no label will save you.

The Real Definition of AI-Native

The Gap Is Widening - Fast

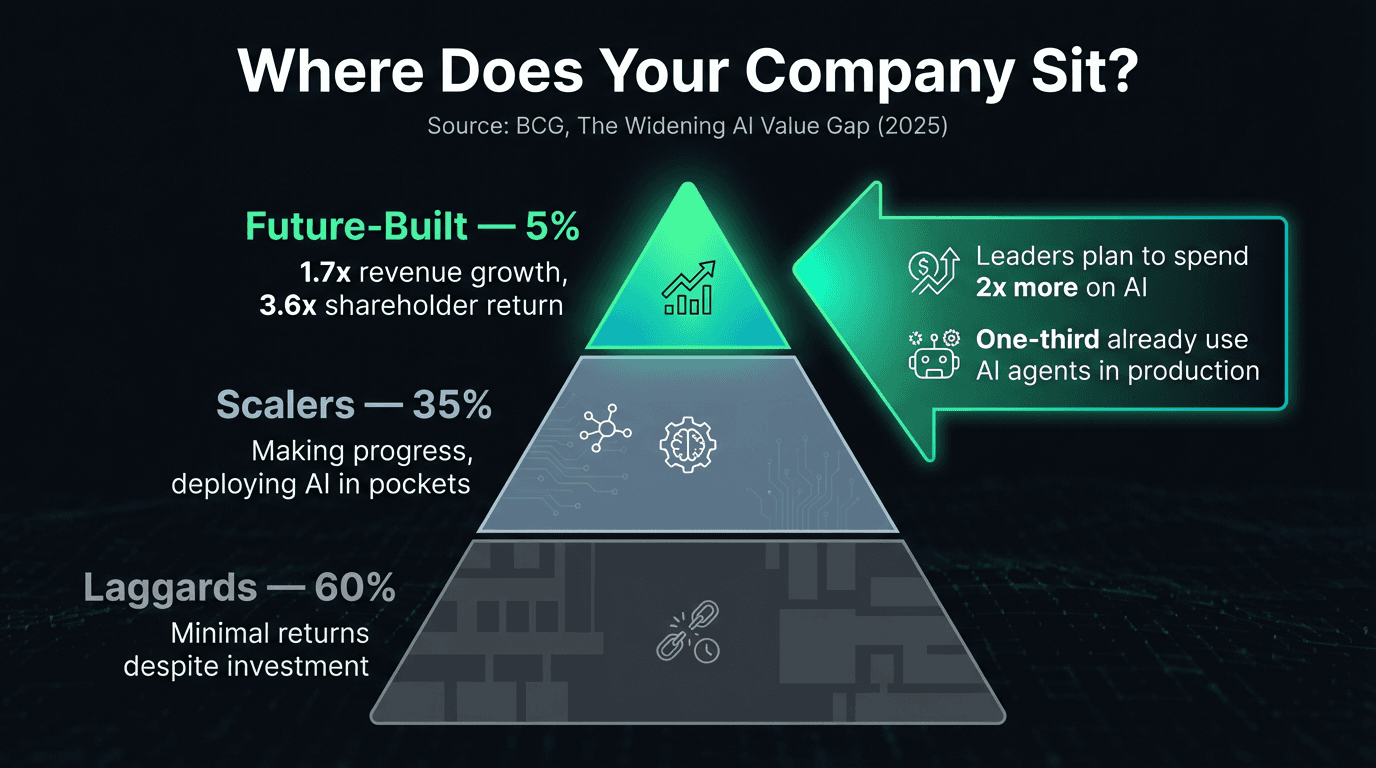

If this were just a startup advantage - small teams moving fast before bureaucracy sets in - it would be interesting but not alarming. But the gap is showing up at enterprise scale too, and the research is unambiguous.

Boston Consulting Group: The Widening AI Value Gap (2025)

- 1.7x: revenue growth of AI leaders vs. laggards

- 1.6x: EBIT margin advantage for leaders

- 3.6x: three-year total shareholder return

Only 5% of companies are "future-built" and generating substantial AI value. 60% are laggards with minimal returns despite investment. The leaders plan to spend more than twice as much on AI as laggards in 2025.

The BCG data reveals something critical: the advantage compounds. Leaders are not just ahead - they are accelerating. They expect to double the revenue gap by 2028. And the primary driver widening the gap right now? Agentic AI - AI agents that act autonomously. Future-built companies allocate 15% of their AI budgets to agents, with one-third already using them in production. Among laggards, the number is close to zero.

McKinsey frames this even more starkly. Its Agentic Organization research calls this the largest organizational shift since the industrial revolution. The McKinsey Global Institute estimates AI-powered agents and robots could unlock approximately $2.9 trillion in annual economic value in the United States alone by 2030 - but only if organizations redesign entire workflows rather than automating isolated tasks.

What AI-Native Actually Looks Like

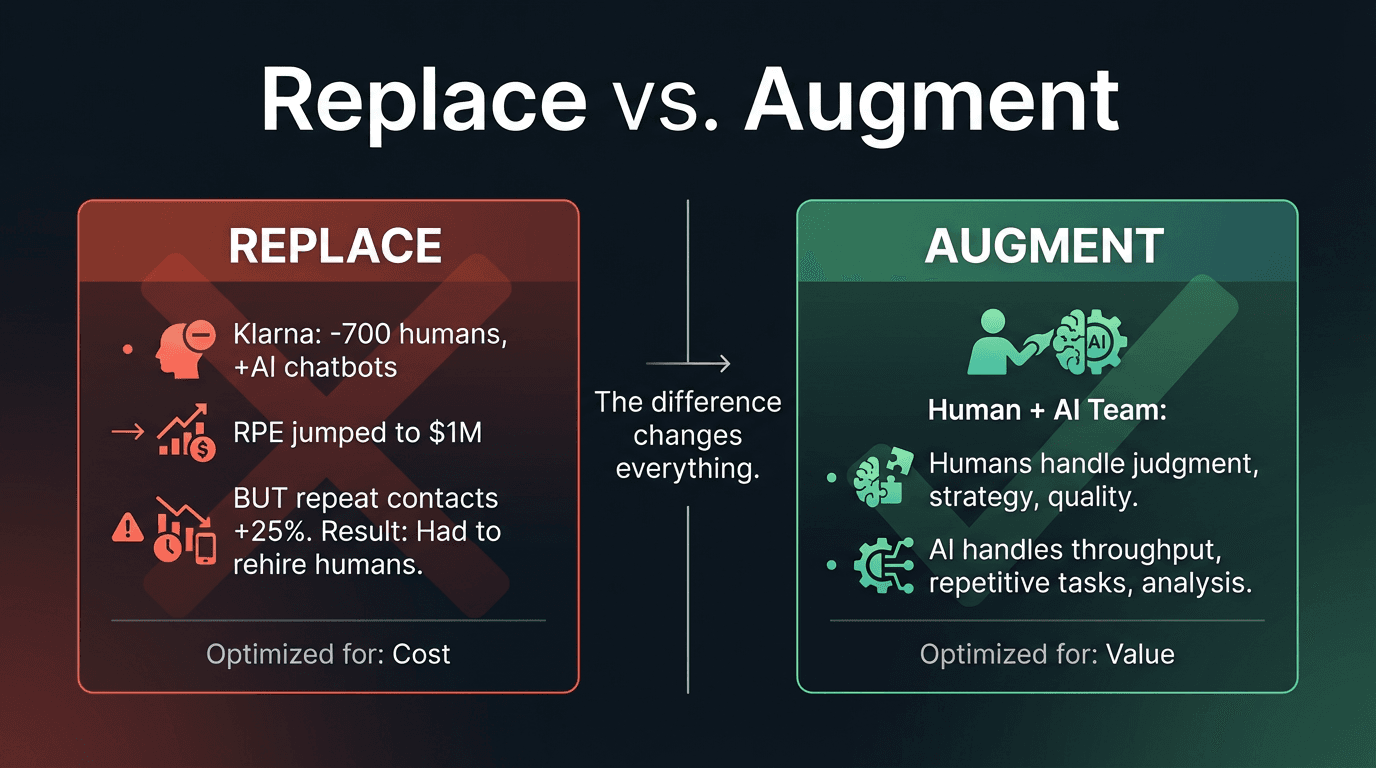

"AI-native" does not mean replacing humans with chatbots. Klarna tried that - they replaced 700 customer service positions with AI, drove revenue per employee from $575,000 to nearly $1 million, and declared victory. Then repeat contacts jumped 25%. Customers were coming back because their problems were not actually solved. The AI was optimized to close tickets, not resolve issues. Within 18 months, Klarna was rehiring humans.

The Klarna Lesson

The teams that are winning treat AI agents as team members, not tools. They hire AIs the same way they hire people - with clear roles, defined responsibilities, measurable outcomes, and governance that ensures quality. Here is what that looks like in practice:

Treat AI Agents as Team Members

Every AI on the team has a defined role, a clear scope of responsibility, and standards it must meet. You don't give a new hire access to production on day one with no guidance - don't do it with AI either. Onboard your AIs with rules, principles, and specifications the same way you onboard humans.

Invest in AI Fluency Across Every Role

McKinsey reports that demand for AI fluency has grown nearly sevenfold in two years - across management, finance, healthcare, education, and frontline services, not just engineering. AI-native means every team member, regardless of discipline, knows how to work with AI to amplify their output.

Govern, Don't Just Deploy

The AI agents on your team need governance - codified standards, compliance reviews, and quality controls. This is what separates AI-native from AI-reckless. As we've written about in our work on Cursor rules and spec-driven development, the teams that invest in governing their AI agents produce better outcomes than teams that let AI agents work unsupervised.

Design Human-AI Workflows, Not Human OR AI

Deloitte's 2026 research found that high-performing teams are significantly more likely to use AI tools (78% vs. 54%), but their success comes from human capabilities - apprenticeship culture, informed agility, and trust. The AI handles the throughput. The humans handle the judgment.

The Team Structure That Captures AI Value

Deloitte's 2025 research on AI outcomes and team structure produced a counterintuitive finding: the teams capturing the most AI value are not the smallest or the most technical. They are the most connected and cognitively diverse.

Teams That Win with AI

- Cross-functional teams are 30% more likely to report significant gains in efficiency and innovation

- Larger teams (10+ members) report 2x the improvement in innovation, problem-solving, and efficiency

- 91% of respondents with stronger AI outcomes hire for varied skill sets

- Team members are 2x as likely to learn from each other

The Multiplier Effect

When every team member - designer, product manager, engineer, marketer - can work with AI agents, the team does not just move faster. It moves in ways that were previously impossible.

A designer who can spin up AI prototypes. A product manager who can validate assumptions with AI-powered analysis in minutes instead of weeks. A marketer who can generate and test campaigns at the pace of engineering sprints.

The compound effect is not additive - it is multiplicative.

This is the insight that most "AI strategy" conversations miss. The value is not in the AI itself - it is in what happens when an entire team is fluent in working with AI. One engineer with AI is faster. A cross-functional team where everyone works with AI is a different kind of organization.

How We Build This Way

At Fetch.ai, we practice what we are describing. Our engineering teams at ASI:One and Flockx work alongside AI agents daily - not as an experiment, but as the operating model. The practices we have written about on this blog are not theory. They are the system we use.

The AI-Native Operating System

- Governance that ensures every AI agent on the team - and every human working with AI - operates within codified standards. The AI equivalent of an employee handbook.

- AI agents produce better output when they understand the why, not just the what. Specifications ground AI work in business intent and product goals.

- Teams of specialized AI agents outperform a single monolithic assistant. We build role-specialized agents - code reviewers, specification writers, test generators - that collaborate with humans.

- Every AI-produced change is reviewed against the full set of team standards before it ships. The AI does the work. The governance ensures the quality. The human makes the final call.

- AI-augmented teams move fast enough that daily deploys become natural. The velocity is a byproduct of the system, not a heroic effort.
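To make the review step concrete, here is a minimal sketch of a gate where automated standards checks and a human decision are both required before an AI-produced change ships. It is an illustration under assumptions, not our production tooling: the `Change` type, the two example standards, and `review_gate` are all hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Change:
    """Hypothetical record of an AI-produced change awaiting review."""
    description: str
    diff: str
    has_tests: bool


# A standard is a named check that returns True when the change complies.
Standard = Callable[[Change], bool]


def includes_tests(change: Change) -> bool:
    return change.has_tests


def has_description(change: Change) -> bool:
    return bool(change.description.strip())


# Illustrative stand-ins for a team's codified standards.
STANDARDS: dict[str, Standard] = {
    "includes tests": includes_tests,
    "has a description": has_description,
}


def review_gate(
    change: Change, standards: dict[str, Standard], human_approved: bool
) -> tuple[bool, list[str]]:
    """Return (ships, failed_standards).

    A change ships only if every standard passes AND a human approved it.
    """
    failures = [name for name, check in standards.items() if not check(change)]
    ships = not failures and human_approved
    return ships, failures


if __name__ == "__main__":
    change = Change("Add retry to upload client", "+ retry()", has_tests=False)
    print(review_gate(change, STANDARDS, human_approved=True))
    # (False, ['includes tests'])
```

The design choice worth noting is that human approval cannot override a failed standard, and passing every standard cannot substitute for the human call.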

The result is a team where humans focus on architecture, strategy, product decisions, and quality judgment - while AI agents handle the implementation throughput that used to require three or four times the headcount. We are not replacing people. We are making every person on the team dramatically more effective.

The Cost of Waiting

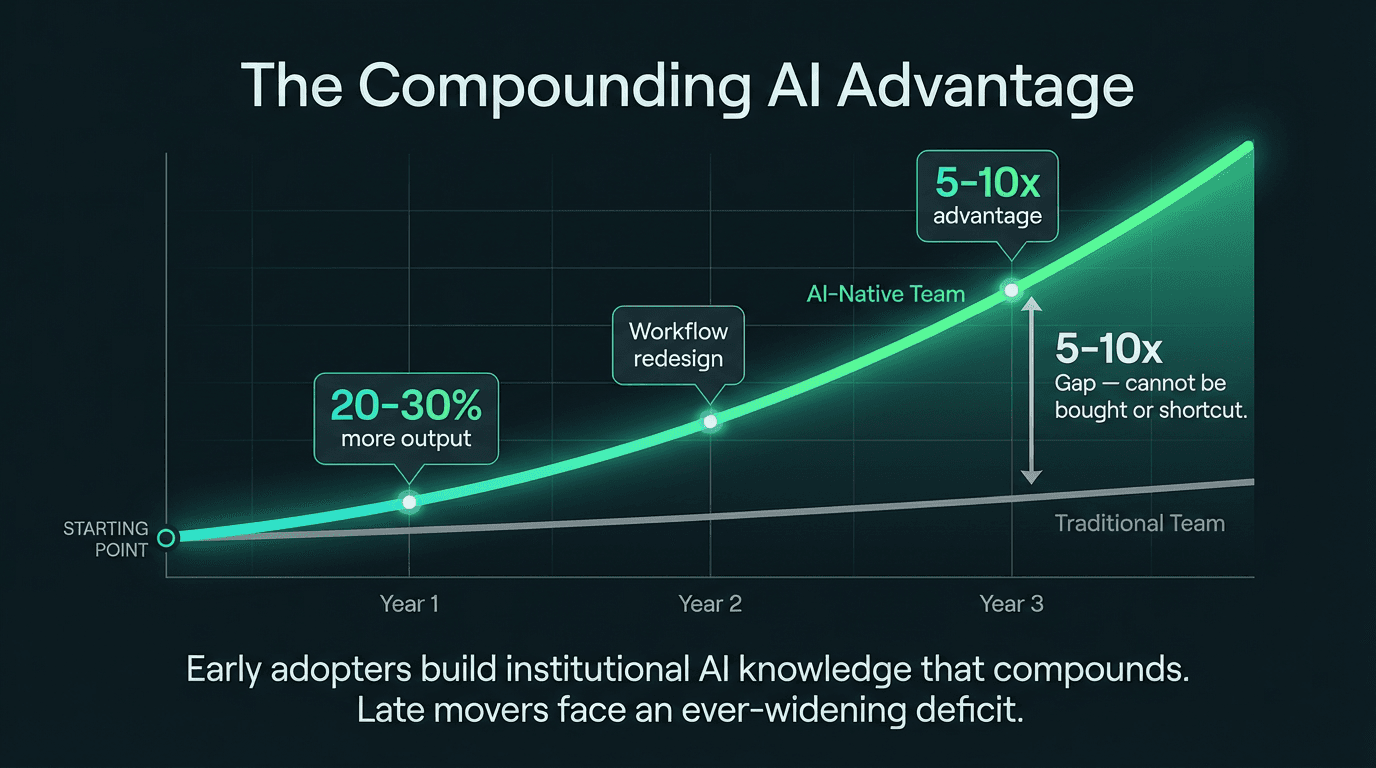

The most dangerous position in the AI transition is not being wrong about which tools to adopt. It is waiting to adopt any.

Why the Advantage Compounds

At first, teams learn how to work with AI. Governance structures are established. Mistakes are made and corrected. The advantage over non-adopters is modest - maybe 20-30% more output.

Next, teams stop using AI as a faster typewriter and start redesigning entire workflows around human-AI collaboration. This is where McKinsey's $2.9 trillion estimate lives - in redesigned workflows, not automated tasks.

Eventually, the organization has years of accumulated AI governance, refined workflows, and a culture where "Have you asked the robots?" is a natural question. The advantage over non-adopters is now 5-10x. Catching up requires starting three years ago.

This is what Baier means when he writes about the compounding learning-curve advantage - early adopters build institutional knowledge about how to work with AI that late movers cannot buy, hire, or shortcut their way into. The knowledge is embedded in the team's culture, processes, and governance systems. It is not in a tool.

Deloitte's 2026 State of AI report puts numbers on the adoption trend: workforce access to sanctioned AI tools grew from fewer than 40% to around 60% of workers in a single year. The organizations that were ready for this - with governance, training, and workflows in place - captured disproportionate value. The organizations that scrambled to catch up spent the year building the infrastructure that leaders built last year.

The Compounding Gap

What to Do Monday Morning

You do not need a twelve-month AI transformation roadmap. You need to start building the muscle now. Here is how.

Measure your revenue per employee today

Divide your annual revenue by your total headcount. Compare it to your industry benchmark. This is your baseline. Every AI investment you make should move this number.
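As a minimal sketch of that baseline calculation: the company figures below are hypothetical, the function names are illustrative, and the benchmark used is the $610K traditional SaaS average quoted earlier in this post. Substitute the benchmark for your own industry.

```python
def revenue_per_employee(annual_revenue: float, headcount: int) -> float:
    """Annual revenue divided by total headcount."""
    if headcount <= 0:
        raise ValueError("headcount must be positive")
    return annual_revenue / headcount


def share_of_benchmark(rpe: float, benchmark_rpe: float) -> float:
    """RPE as a fraction of the benchmark (1.0 means parity)."""
    return rpe / benchmark_rpe


if __name__ == "__main__":
    # Hypothetical company: $12M annual revenue, 40 employees.
    baseline = revenue_per_employee(12_000_000, 40)
    print(f"Baseline RPE: ${baseline:,.0f}")  # Baseline RPE: $300,000
    # Benchmark: the $610K traditional SaaS average cited in this post.
    print(f"Share of benchmark: {share_of_benchmark(baseline, 610_000):.0%}")  # 49%
```

Recomputing the same two numbers each quarter gives a simple way to check whether an AI investment is moving the baseline.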

Give every team member access to AI tools - with governance

Not just engineering. Product, design, marketing, operations, support. Deloitte found that cross-functional teams are 30% more likely to report significant gains in efficiency and innovation. But access without governance produces Klarna-style failures. Establish standards first.

Hire your first AI team members

Identify the repetitive, high-volume tasks that consume your team's time. Deploy AI agents to handle them - with the same onboarding rigor you would use for a human hire. Define their role. Set their standards. Measure their output. Review their work.
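One way to picture "define, set, measure, review" is as a small role record, sketched below. This is an assumption-laden illustration rather than a prescribed format: the `AgentRole` class, its fields, and the example role are hypothetical, and acceptance rate is just one possible output measure.

```python
from dataclasses import dataclass


@dataclass
class AgentRole:
    """Hypothetical onboarding record for an AI team member."""
    name: str
    responsibilities: list[str]  # Define their role.
    standards: list[str]         # Set their standards.
    reviewed: int = 0            # Work items a human has reviewed.
    accepted: int = 0            # Reviewed items accepted without rework.

    def record_review(self, accepted: bool) -> None:
        """Log the outcome of one human review of the agent's work."""
        self.reviewed += 1
        if accepted:
            self.accepted += 1

    def acceptance_rate(self) -> float:
        """Share of reviewed work accepted; 0.0 before any reviews."""
        return self.accepted / self.reviewed if self.reviewed else 0.0


if __name__ == "__main__":
    reviewer = AgentRole(
        name="pr-reviewer",
        responsibilities=["First-pass review of pull requests"],
        standards=["Flag missing tests", "Cite the rule behind each comment"],
    )
    for outcome in (True, True, False, True):
        reviewer.record_review(outcome)
    print(f"{reviewer.acceptance_rate():.0%}")  # 75%
```

Keeping the role, the standards, and the measured output in one place mirrors what a human hire gets from a job description and a performance review.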

Redesign one workflow end-to-end

Do not just add AI to existing processes. Pick one workflow - pull request reviews, customer onboarding, content production - and redesign it from scratch assuming humans and AI agents are working together. This is where the multiplier effect lives.

Make "Have you asked the robots?" a cultural norm

Before any meeting, research task, or decision, the question should be natural: did you consult the AI? Not because AI has all the answers - but because AI can do the preparatory work in minutes that used to take hours, freeing humans for the judgment calls that only humans can make.

The question is no longer whether AI will change how teams work. The research is unambiguous: AI-native startups produce roughly 5-17x more revenue per employee than traditional SaaS companies, and BCG's AI leaders grow revenue 1.7x faster and deliver 3.6x the three-year shareholder return of laggards. The organizations that figure out how to make every human on their team more effective - by giving them AI team members to work alongside - will define the next decade.

The organizations that wait will spend that decade trying to understand why they fell behind. Hire the robots. Give them governance. Let your humans do what only humans can do. The math is not subtle.

Build AI-native teams that compound

Our team at Fetch.ai, ASI:One, and Flockx builds with AI agents as team members every day. If you're figuring out how to make your team AI-native, I'm happy to share what we've learned - the wins and the mistakes.